- Services

Technology Capabilities

- Product Strategy & Experience Design: Define software-driven value chains, create purposeful interactions, and develop new segments and offerings.

- Digital Business Transformation: Advance your digital transformation journey.

- Intelligence Engineering: Leverage data and AI to transform products, operations, and outcomes.

- Software Product Engineering: Create high-value products faster with AI-powered and human-driven engineering.

- Technology Modernization: Tackle technology modernization with approaches that reduce risk and maximize impact.

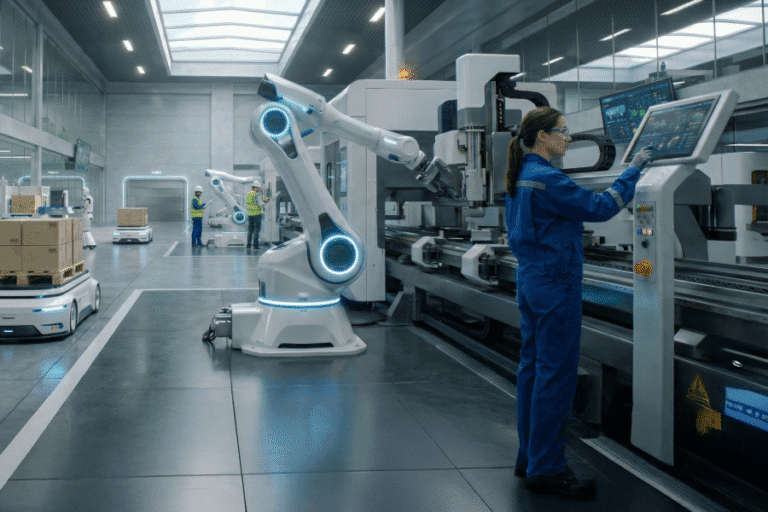

- Embedded Engineering & IT/OT Transformation: Develop embedded software and hardware. Build IoT and IT/OT solutions.

- Industries

- GlobalLogic VelocityAI

- Insights

Case Studies (GlobalLogic): Engineering Enterprise Transformation with AI-Powered Software ...

A global ERP provider boosted SDLC productivity by 40%, accelerated delivery, and reduc...

Blogs (Sameer Tikoo, April 24, 2026): The IQ Era of Connectivity: How AI in Telecom is Redefining ...

Explore how telcos can move from AI experimentation to production-scale intelligent net...

- About

Press Release (GlobalLogic, April 22, 2026): GlobalLogic Announces Availability of VelocityAI on Google Cloud ...

At Google Cloud Next 2026, GlobalLogic Inc., a Hitachi Group Company and leader in digi...

Media Coverage (GlobalLogic, March 12, 2026): How AI is Transforming the Future of the Power Grid

In an article for RTO Insider, GlobalLogic’s Yuriy Yuzifovich, Malcolm Hay and Renan Gi...

- Careers

Blogs (GlobalLogic, 22 February 2026): If You Build Products, You Should Be Using Digital Twins

Digital twins are the foundation of modern product ...

Blogs (GlobalLogic, 18 December 2025): Physical AI: Bringing Intelligence to the Edge of Action

At GlobalLogic, we’re building systems that don’t just ...
