Published on March 1, 2026

Pioneering the Next Generation of Intelligent Networks

Serhiy Semenov, Director, Engineering
Adam Radlinski, Principal Architect
Michal Celejewski
Mykhailo Morhal

5G Ghost Preamble Detection Using AI

Executive Summary

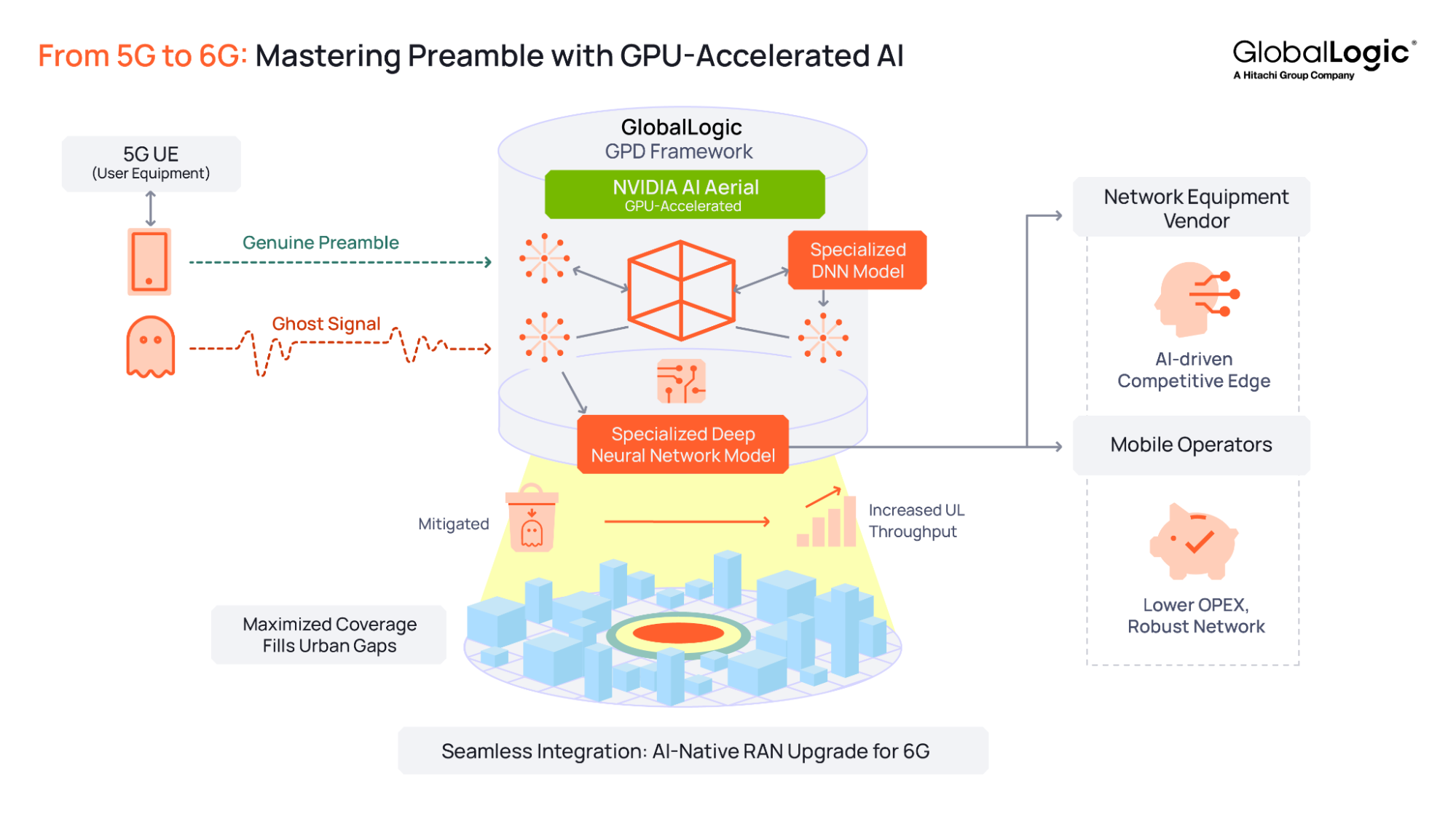

Ghost preambles are a persistent network challenge. When a base station mistakes noise for a valid random access preamble, it wastes uplink resources, and the usual countermeasure, a higher access threshold, reduces coverage.

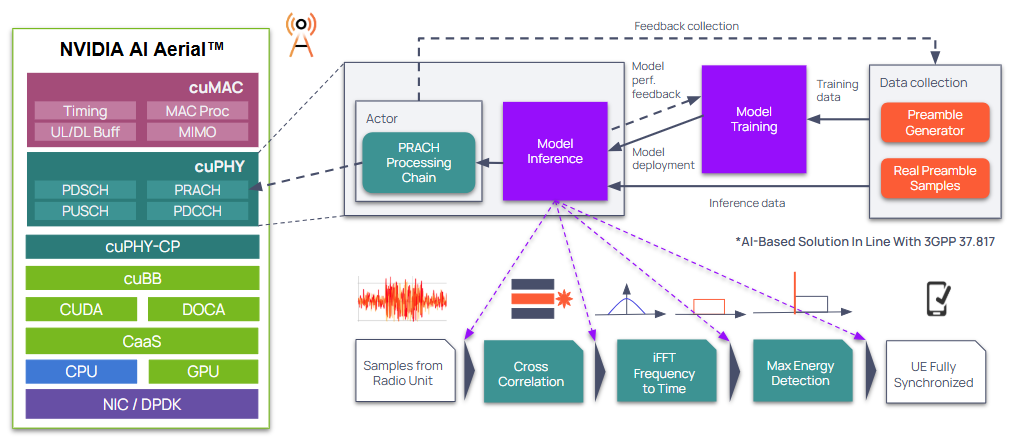

GlobalLogic has developed an AI-powered ghost preamble detection framework that runs GPU-accelerated deep learning inside the 5G RAN signal-processing chain to detect ghost preambles in real time. The framework integrates into existing 5G stacks and marks a significant step toward AI-native RAN and future 6G implementations. The solution is built on NVIDIA AI Aerial and extends the cuPHY GPU-accelerated L1 stack to run deep learning inference directly inside the PRACH signal-processing pipeline.

GlobalLogic testing shows that AI-powered ghost preamble detection delivers clear benefits:

- Improves throughput and capacity, with peak uplink (UL) gains of up to 2.63 Mbps per cell (up to 24.1% in lower-bandwidth cells).

- Maximizes coverage and efficiency by allowing lower detection thresholds, extending uplink cell range by up to 58.2% and filling urban gaps without new site deployments.

- Gives network equipment OEMs an AI-driven competitive edge with smarter RAN features that boost performance and support premium software offerings.

- Lowers costs for mobile operators through better spectrum utilization and more robust performance in weak-signal and high-density environments.

Leveraging NVIDIA AI Aerial, GlobalLogic has pioneered an AI-RAN solution that moves beyond the limits of traditional PRACH processing. This milestone, validated with VIAVI Solutions, paves the way for the scalability and spectral integrity required for future 6G implementations.

What Ghost Preambles Are and Why They Are Troublesome

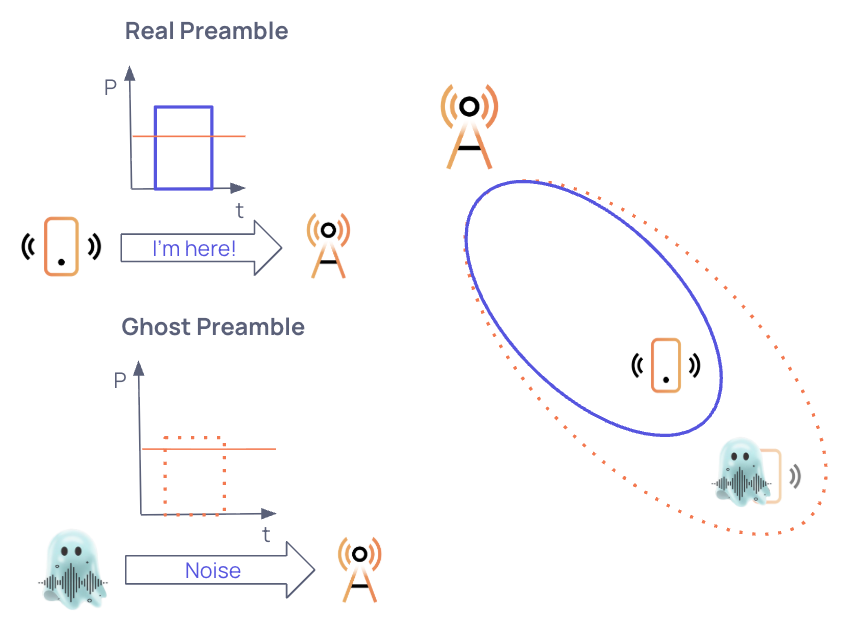

When a device wants to connect to the network, it sends a defined preamble sequence to the base station (gNodeB). However, the gNodeB detection algorithm can mistakenly identify noise, inter-cell interference, or other RF artifacts as valid connection requests, causing a failure known as a “ghost preamble”.

Figure 1. Appearance of “Ghost Preambles” in the network.

Ghost preambles create three major challenges:

- They waste scarce radio resources and spectrum, reducing capacity for legitimate users and lowering overall network throughput.

- They generate unnecessary signaling traffic that diverts resources away from real users and legitimate network operations.

- They require higher access thresholds to avoid false detections, which shrinks effective coverage.

Conventional Preamble Detection Methods

Standard 5G processing identifies connection requests by correlating the received frequency-domain signal with Zadoff-Chu (ZC) sequences, applying an iFFT to return to the time domain, and then locating energy peaks within specific time windows. While this approach is the industry standard for efficiency, it acts as a “blind” filter: it cannot distinguish between a legitimate low-power signal and high-energy interference. This lack of granularity leads to ghost preambles that trigger unnecessary signaling and drain network capacity.
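The conventional chain can be sketched in pure Python. This is a simplified, hypothetical model, not cuPHY code: it assumes a single ZC root (u = 1) of length 139, treats the received preamble as a circularly delayed copy of the root plus complex Gaussian noise (no OFDM modulation, subcarrier mapping, or cyclic-shift sets), and uses a naive DFT in place of an FFT. The detection metric, peak power over mean power of the power delay profile, is one common choice and an assumption here. Because it only looks at peak energy, a strong enough noise spike passes exactly like a real preamble.

```python
import cmath
import random
from typing import List, Tuple

L_RA = 139  # short ZC sequence length (assumed; used by formats such as B4)


def zc_root(u: int, n: int = L_RA) -> List[complex]:
    """Zadoff-Chu root sequence x_u(m) = exp(-j*pi*u*m*(m+1)/n)."""
    return [cmath.exp(-1j * cmath.pi * u * m * (m + 1) / n) for m in range(n)]


def dft(x: List[complex], inverse: bool = False) -> List[complex]:
    """Naive O(n^2) DFT; a production L1 would use a GPU FFT instead."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [
        sum(x[m] * cmath.exp(sign * 2j * cmath.pi * k * m / n) for m in range(n))
        for k in range(n)
    ]
    return [v / n for v in out] if inverse else out


def power_delay_profile(rx: List[complex], root: List[complex]) -> List[float]:
    """Multiply by the conjugate root spectrum, then IDFT back to the lag domain."""
    rx_f, root_f = dft(rx), dft(root)
    corr = dft([a * b.conjugate() for a, b in zip(rx_f, root_f)], inverse=True)
    return [abs(c) ** 2 for c in corr]


def detect(rx: List[complex], root: List[complex], threshold: float) -> Tuple[bool, int, float]:
    """Return (detected, lag of peak, peak-to-average metric) for one ZC root."""
    pdp = power_delay_profile(rx, root)
    peak = max(pdp)
    metric = peak / (sum(pdp) / len(pdp))
    return metric > threshold, pdp.index(peak), metric


def noise(n: int, power: float, rng: random.Random) -> List[complex]:
    """Complex white Gaussian noise with the given mean power per sample."""
    s = (power / 2) ** 0.5
    return [complex(rng.gauss(0, s), rng.gauss(0, s)) for _ in range(n)]


rng = random.Random(1)
root = zc_root(u=1)
delay = 12  # hypothetical timing offset in samples
rx = [root[(m - delay) % L_RA] + w for m, w in enumerate(noise(L_RA, 1.0, rng))]
print(detect(rx, root, threshold=10.0)[:2])  # real preamble at 0 dB SNR: (True, 12)
ghost_rx = noise(L_RA, 1.0, rng)  # nothing transmitted at all
print(detect(ghost_rx, root, threshold=3.0)[0])  # a low threshold tends to let pure noise through
```

The peak lag doubles as the timing estimate; the threshold is the only knob separating preambles from noise, which is the limitation the AI stage targets.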

GlobalLogic’s AI-powered Approach to Ghost Preamble Detection (GPD)

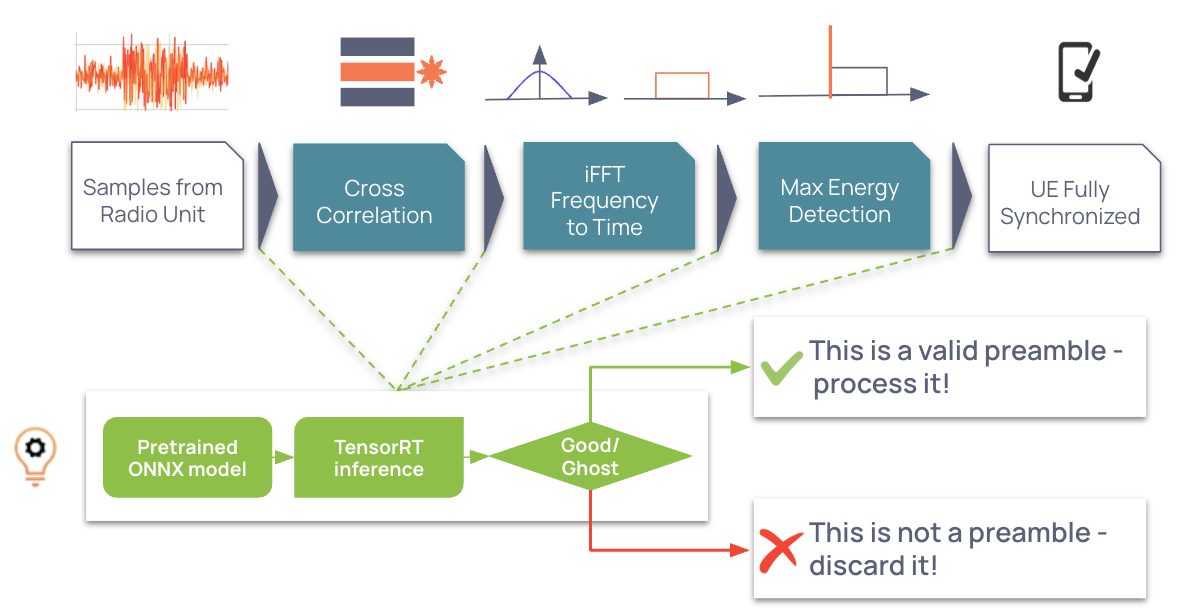

To overcome these limitations of conventional detection, GlobalLogic explored combining Deep Neural Networks (DNNs) with GPU-accelerated RAN processing to run AI model inference fast enough to meet L1 timing constraints. These DNNs can evaluate data at each processing step to identify ghost preambles.

Figure 3. AI-powered approach to PRACH processing.

The deep learning framework uses DCN architectures in four separate deep learning models, each specialized for a different stage of the RAN processing pipeline. The PRACH processing pipeline serves as the functional actor, executing real-time inference within the RAN protocol stack. The models were trained on a hybrid dataset of synthetically generated and field trial samples, which enables them to judge whether a signal is real or a ghost. If it is real, it is processed; if it is a ghost, it is discarded.

This approach combines traditional signal processing with AI to provide a robust, adaptive mechanism for detecting ghost preambles in 5G networks. The solution integrates into existing 5G stacks in accordance with 3GPP TR 37.817, and the AI model can be enabled at whichever integration point offers the best balance of detection performance, computational requirements, and implementation complexity.
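The real-or-ghost decision can be pictured as a gate placed after the classic detector, with the integration point chosen by configuration. The sketch is purely illustrative: the stage names, the `Candidate` fields, the `ai_gate` function, and the 0.5 probability cut-off are hypothetical, and `toy_model` stands in for a trained deep learning model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical integration points, named after the AI_xCorr, AI_iFFT and AI_MaxEnergy models.
STAGES = ("xcorr", "ifft", "max_energy")

# A model maps the features captured at one stage to an estimated P(real preamble).
Model = Callable[[Sequence[float]], float]


@dataclass
class Candidate:
    """A preamble already flagged by the classic detector (illustrative fields only)."""
    preamble_index: int
    features: Dict[str, List[float]] = field(default_factory=dict)


def ai_gate(
    candidates: List[Candidate], model: Model, stage: str, p_min: float = 0.5
) -> Tuple[List[Candidate], List[Candidate]]:
    """Split candidates into (real, ghosts); only `real` would go on to MSG2 (RAR) scheduling."""
    if stage not in STAGES:
        raise ValueError(f"unknown integration point: {stage!r}")
    real: List[Candidate] = []
    ghosts: List[Candidate] = []
    for cand in candidates:
        p_real = model(cand.features[stage])
        (real if p_real >= p_min else ghosts).append(cand)
    return real, ghosts


def toy_model(feats: Sequence[float]) -> float:
    """Stand-in for a trained network: 'real' if the single energy feature is large."""
    return 1.0 if feats[0] > 20.0 else 0.0


cands = [Candidate(5, {"max_energy": [70.0]}), Candidate(41, {"max_energy": [4.2]})]
real, ghosts = ai_gate(cands, toy_model, stage="max_energy")
print([c.preamble_index for c in real], [c.preamble_index for c in ghosts])  # [5] [41]
```

Passing a different `stage` mirrors enabling the model at a different point in the PRACH chain; the rest of the pipeline only ever sees the candidates the gate keeps.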

Figure 4. AI-powered ghost preamble detection: integration into 5G RAN based on 3GPP TR 37.817.

GlobalLogic Solution Validation

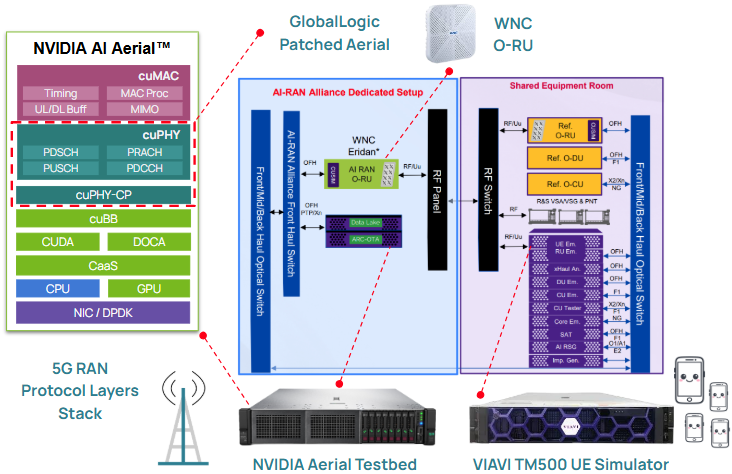

Lab Setup

GlobalLogic used the open-source NVIDIA AI Aerial stack, which includes cuPHY, a GPU-accelerated L1 implementation designed to be easily extended, making it an ideal foundation for AI-based L1 enhancements. NVIDIA AI Aerial also provides MATLAB scripts for generating RAN data, including PRACH preambles, which can be adapted to produce the required training and validation datasets. In addition, NVIDIA AI Aerial integrates cleanly with TensorRT, NVIDIA's high-performance AI inference library, simplifying deployment of trained models.

GlobalLogic partnered with the VIAVI VALOR lab to validate the solution under a range of real-world conditions. The following setup was used:

Figure 5. VIAVI VALOR Lab setup.

“Validating AI-driven RAN innovations requires a test environment that mirrors the complexity of the real world. By leveraging the VIAVI VALOR Lab, GlobalLogic was able to stress-test their ghost preamble detection framework against high-fidelity signal interference and diverse mobility scenarios, proving that AI can significantly move the needle on 5G spectral efficiency.” (Erik Probstfield, Sr. Director VALOR, VIAVI Solutions)

The testing was performed on the following configuration:

Table 1. Test configuration.

| Parameter | Configuration |
|---|---|
| Band | n78 |
| Duplex mode | TDD |
| Subcarrier spacing | 30 kHz |
| Channel bandwidth | 100 MHz |
| TDD pattern | DDDSU (5 ms frame) |
| Preamble format | B4 |
| Antenna configuration | 2 antennas |

Model Training and Validation Data

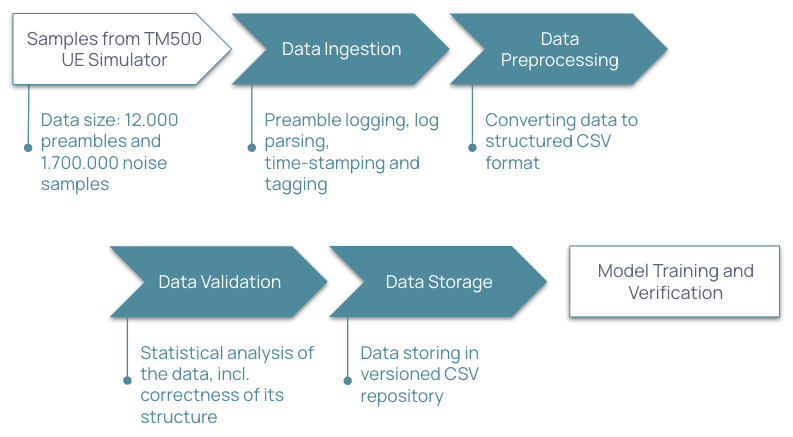

AI models require large, validated datasets, both to train the model and to validate it during training and during inference on the physical gNodeB.

Figure 6. Data4AI pipeline: how to train the model.

Stages

Data ingestion

- Preambles logged by instrumented code.

- VIAVI TM500 logs parsed.

- Timestamps recorded.

- Samples tagged as real or ghost preambles.

- Data saved in a structured format.

Data preprocessing

- Data converted to a structured CSV format.

Data validation

- Statistical analysis of the data.

- Visualization and inspection of the data for odd values, presence of all expected preamble classes, and SINR value reliability.

Data storage

- Data stored in a versioned repository in CSV format.

Data application for model training and verification

- The dataset is split into two parts: 90% for training and 10% for validation.
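The ingestion and tagging stages can be sketched as a timestamp join between the gNodeB's own detection log and the transmissions parsed from the VIAVI TM500 logs. The article does not specify log fields, the matching rule, or a tolerance, so the `(timestamp, preamble index)` layout, the 1 ms window, and the function names below are all hypothetical. A detection with no transmission of the same preamble index inside the window is tagged as a ghost.

```python
import csv
import io
from bisect import bisect_left
from typing import Dict, List, Sequence, Tuple

Event = Tuple[float, int]  # (timestamp in seconds, preamble index); hypothetical log layout


def label_detections(
    gnb_detections: Sequence[Event],
    ue_transmissions: Sequence[Event],
    tolerance_s: float = 1e-3,
) -> List[Dict[str, object]]:
    """Tag each gNodeB detection 'real' if the UE emulator sent the same preamble
    index within +/- tolerance_s, otherwise 'ghost'."""
    sent = sorted(ue_transmissions)
    times = [t for t, _ in sent]
    rows: List[Dict[str, object]] = []
    for t, idx in gnb_detections:
        i = bisect_left(times, t - tolerance_s)  # first transmission inside the window
        match = False
        while i < len(sent) and times[i] <= t + tolerance_s:
            if sent[i][1] == idx:
                match = True
                break
            i += 1
        rows.append({"timestamp_s": t, "preamble_index": idx,
                     "label": "real" if match else "ghost"})
    return rows


def to_csv(rows: List[Dict[str, object]]) -> str:
    """Serialize labelled rows to CSV text, the storage format named in the pipeline."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=["timestamp_s", "preamble_index", "label"])
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


gnb = [(0.100, 7), (0.150, 23), (0.200, 7)]  # detections logged by instrumented gNodeB code
ue = [(0.1002, 7), (0.2001, 7)]              # transmissions parsed from the UE emulator logs
rows = label_detections(gnb, ue)
print([r["label"] for r in rows])  # ['real', 'ghost', 'real']
print(to_csv(rows))
```

Sorting the transmissions once and bisecting into the window keeps the join fast even for the tens of thousands of events a test session produces.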

Solution Validation and Benchmarking

The data source is a set of test cases designed and developed for this research. In each test case, one or more UEs repeatedly attach to and detach from the network under the following conditions:

- Static with no noise.

- Static with various noise levels.

- Moving at various speeds in different noise conditions.

The result is a large set of preambles, both clean and distorted by noise and Doppler shift, as well as a large set of noise samples. A typical test session yields 17,000 preambles and 17,000 noise samples. The data is then divided into 90% for training and 10% for validation.
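With 17,000 preambles and 17,000 noise samples per session, a 90/10 split yields 30,600 training and 3,400 validation samples. The sketch below splits each class separately so both subsets keep the 50/50 real-to-ghost balance. The article states only the ratio, so stratification, the fixed seed, and the function name are assumptions.

```python
import random
from typing import List, Sequence, Tuple


def stratified_split(
    samples: Sequence[object], labels: Sequence[str], train_frac: float = 0.9, seed: int = 0
) -> Tuple[List[Tuple[object, str]], List[Tuple[object, str]]]:
    """Shuffle and split each class independently, so class ratios match in both parts."""
    if len(samples) != len(labels):
        raise ValueError("samples and labels must have the same length")
    rng = random.Random(seed)
    train: List[Tuple[object, str]] = []
    val: List[Tuple[object, str]] = []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        cut = round(len(idx) * train_frac)
        train.extend((samples[i], cls) for i in idx[:cut])
        val.extend((samples[i], cls) for i in idx[cut:])
    rng.shuffle(train)  # interleave classes so training batches are mixed
    rng.shuffle(val)
    return train, val


# Placeholder sample IDs standing in for one typical session.
labels = ["real"] * 17_000 + ["ghost"] * 17_000
train, val = stratified_split(list(range(len(labels))), labels)
print(len(train), len(val))  # 30600 3400
```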

Key Performance Indicators (KPIs) Achieved

The following KPIs were set for model inference:

- Ghost preamble detection rate: Percentage of ghost (noise) samples correctly classified as noise.

- Preamble detection rate: Percentage of real preambles correctly classified as real preambles.

- Inference time [µs]: Overall PRACH processing time must not exceed 1 ms.
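Reading both rates as per-class recall (an interpretation of the KPI definitions), they can be computed from labelled validation results as below, together with the timing headroom left inside the 1 ms PRACH budget. The "real"/"ghost" label encoding and function names are hypothetical; 101 µs is the AI_xCorr mean inference time reported in Table 3, and how the remaining headroom is shared across the rest of the PRACH chain is not stated in the article.

```python
from typing import Dict, Sequence

PRACH_BUDGET_US = 1000.0  # overall PRACH processing must not exceed 1 ms


def detection_rates(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[str, float]:
    """Per-class recall in percent for labels 'real' and 'ghost'."""
    pairs = list(zip(y_true, y_pred))
    ghosts = [p for t, p in pairs if t == "ghost"]
    reals = [p for t, p in pairs if t == "real"]
    return {
        "ghost_detection_rate": 100.0 * sum(p == "ghost" for p in ghosts) / len(ghosts),
        "preamble_detection_rate": 100.0 * sum(p == "real" for p in reals) / len(reals),
    }


def headroom_us(mean_inference_us: float) -> float:
    """Budget left for the rest of PRACH processing after AI inference (negative = overrun)."""
    return PRACH_BUDGET_US - mean_inference_us


print(detection_rates(["ghost", "ghost", "real", "real"], ["ghost", "real", "real", "real"]))
# {'ghost_detection_rate': 50.0, 'preamble_detection_rate': 100.0}
print(headroom_us(101.0))  # prints 899.0
```

Keeping the two rates separate matters: a model that labels everything as a ghost scores 100% on the first KPI while failing the second.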

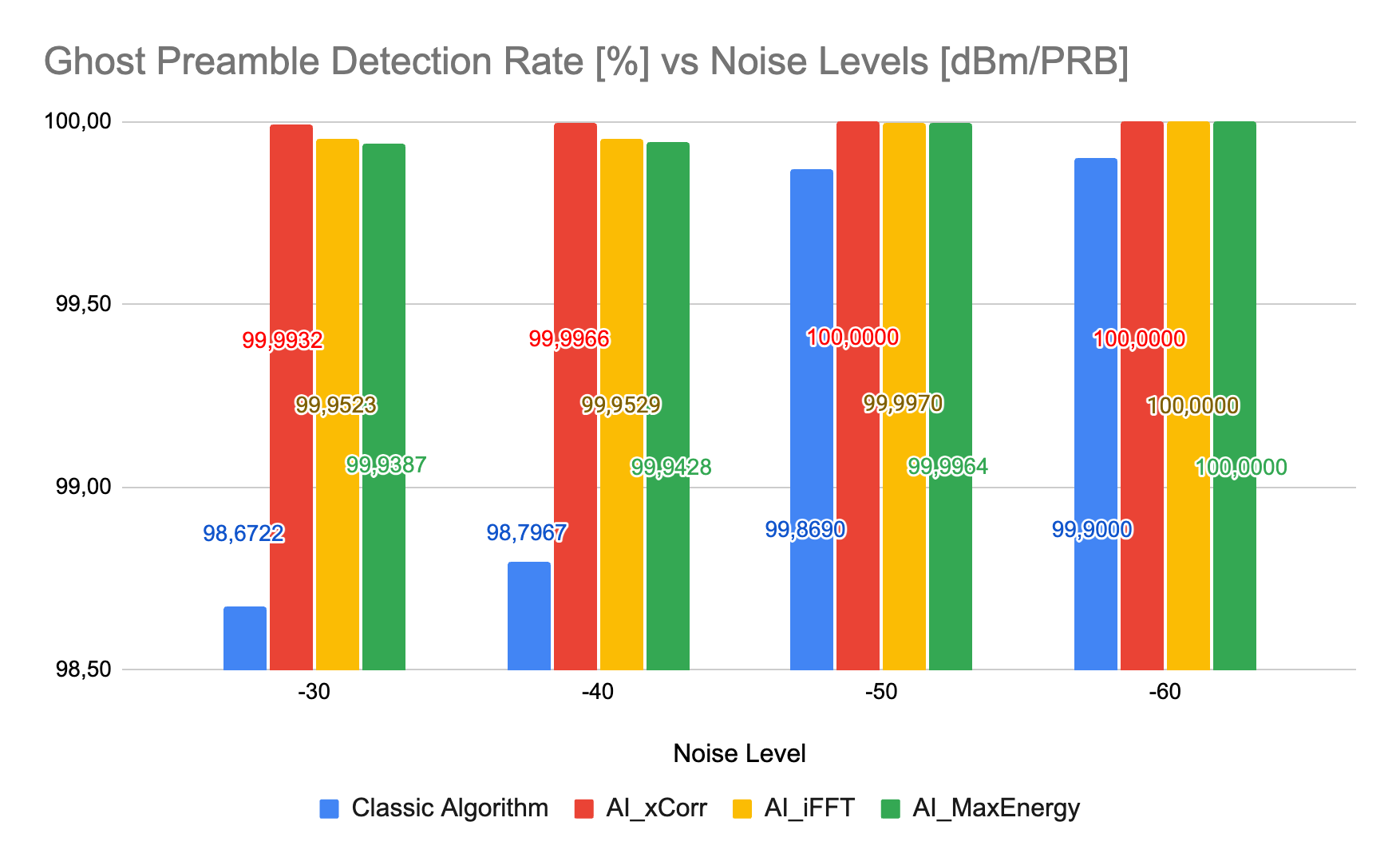

Results are provided for the best-performing models: AI_xCorr, AI_iFFT, and AI_MaxEnergy.

Ghost Preamble Detection Rate

Figure 7. Ghost preamble detection rate: Classic algorithm vs AI models (AI_xCorr, AI_iFFT, AI_MaxEnergy) in challenging radio conditions

The test demonstrated very good ghost preamble detection in challenging radio conditions. All AI models outperformed the classic algorithm and discarded over 99.9% of ghost preambles, a rate that remained nearly constant across the full range of TM500 noise power levels.

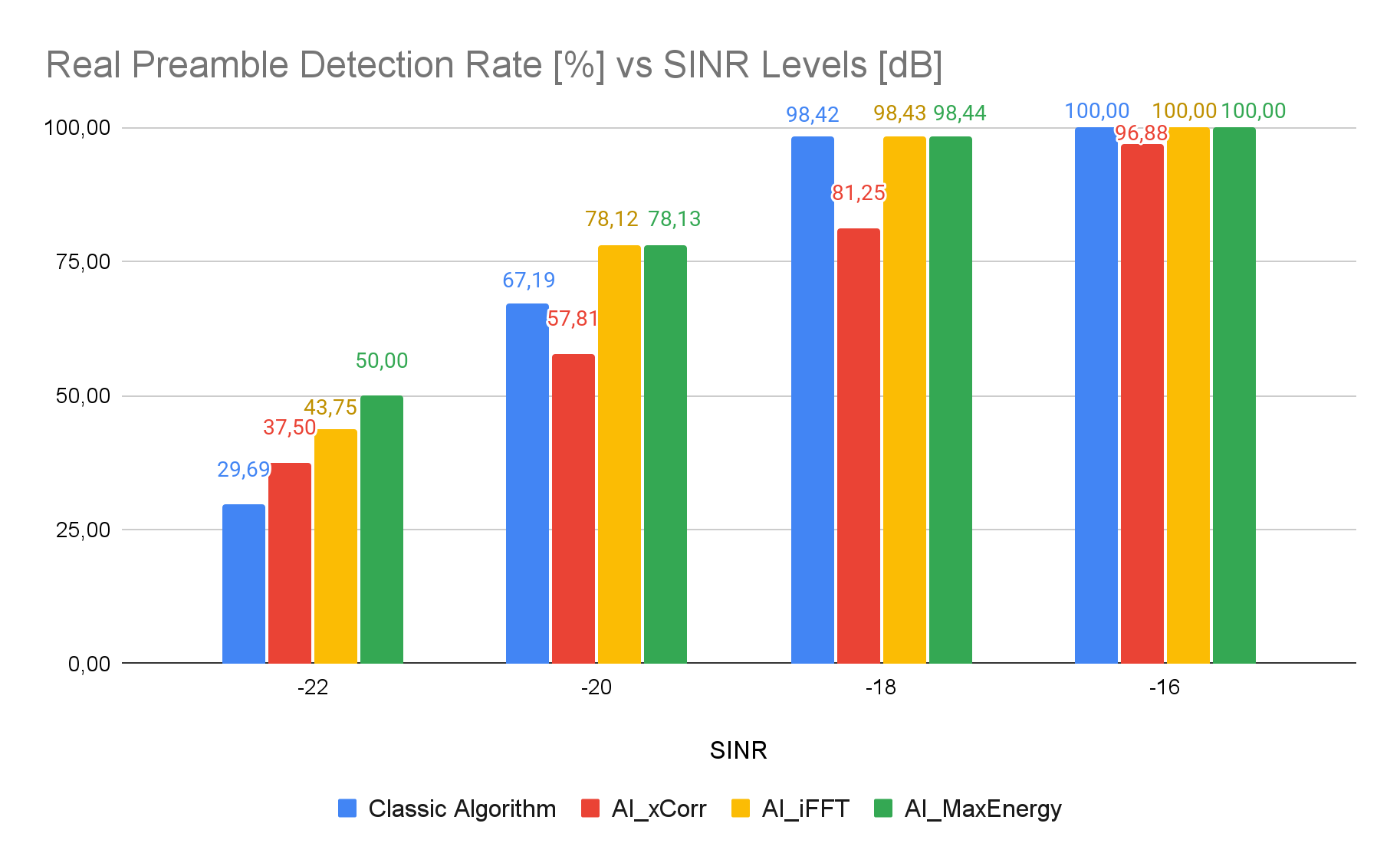

Real Preamble Detection Rate

Figure 8. Real preamble detection rate: Classic algorithm vs AI models (AI_xCorr, AI_iFFT, AI_MaxEnergy) in challenging radio conditions

The test demonstrated satisfactory real preamble detection in challenging radio conditions, and the AI models performed consistently with the classic algorithm. Only the AI_xCorr model showed more pronounced degradation, likely because of its sensitivity to the Doppler effect, timing delays, and other non-linear factors. The other models achieved over 99% detection at SINR levels down to -16.5 dB, and AI_iFFT and AI_MaxEnergy outperformed the classic algorithm at lower SINR levels.

Inference time

Finally, measurements of the mean inference time of each AI model confirmed that the AI-powered solution meets the timing constraints of the PRACH processing pipeline.

Table 3. AI model inference time.

| AI model | AI_xCorr | AI_iFFT | AI_MaxEnergy |
|---|---|---|---|
| Inference of TM500 samples, mean time | 101 µs | 94 µs | 92 µs |

Benefits to the Network

Impact on throughput

Ghost preambles affect several physical channels in 5G networks: the gNodeB wastes resources scheduling MSG2 (on PDCCH and PDSCH) and then MSG3 (on PUSCH) for requests that were never sent. PUSCH is impacted the most because uplink resources are limited in the standard TDD pattern. The problem grows in noisy environments.

Choosing an optimal preamble format can partly mitigate ghost preambles, but it introduces a trade-off between PRACH reliability and uplink symbol overhead, because symbols allocated to PRACH cannot be used for PUSCH transmission. Preamble formats offer different levels of processing gain: format B4 uses 12 symbols, providing approximately 10.8 dB of processing gain, while format C0 uses a single symbol, resulting in 0 dB gain.
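The processing-gain figures follow from the number of times each format repeats the ZC sequence, assuming ideal combining: G = 10·log10(N). Per 3GPP TS 38.211, format B4 carries 12 repetitions and C0 one. The helper name is hypothetical.

```python
import math

# Sequence repetitions per preamble for the two short formats discussed (3GPP TS 38.211).
REPETITIONS = {"B4": 12, "C0": 1}


def processing_gain_db(repetitions: int) -> float:
    """Ideal combining gain relative to a single repetition: 10*log10(N) dB."""
    return 10.0 * math.log10(repetitions)


for fmt, n in REPETITIONS.items():
    print(f"{fmt}: {n} repetition(s) -> {processing_gain_db(n):.1f} dB")
# B4: 12 repetition(s) -> 10.8 dB
# C0: 1 repetition(s) -> 0.0 dB
```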

The presence of ghost preambles leads to throughput degradation. Uplink throughput measurement data for preamble format B4 demonstrates how intelligent ghost preamble detection increases performance. For different bandwidths, the following estimated improvements can be achieved:

Table 4. Potential uplink throughput benefit with AI-powered GPD.

| Bandwidth [MHz] | UL throughput for classic solution affected by ghost preamble occurrence* [Mbit/s] | Potential maximal UL throughput with AI-powered ghost preamble detection [Mbit/s] | Throughput benefit [% of maximal UL throughput] |
|---|---|---|---|
| 10 | 8.27 | 10.89 | 24.10 |
| 20 | 21.78 | 24.40 | 10.76 |
| 40 | 49.31 | 51.93 | 5.05 |
| 80 | 104.88 | 107.50 | 2.44 |
| 100 | 132.92 | 135.54 | 1.94 |

In the worst-case scenario, a low detection threshold combined with high noise, ghost preambles are likely to occur in most PRACH occasions. Consequently, the degradation can reach 24.1% in a 10 MHz cell. The maximum benefit is therefore achieved in narrowband scenarios, for example coverage-focused low-band 5G deployments, where such an improvement is critical and can be decisive for ensuring Quality of Service (QoS).
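Recomputing Table 4 shows what the percentage column measures. The absolute gain is 2.62 Mbit/s in every row, and the printed percentages match that gain divided by the AI-enabled (maximal) throughput to within 0.05 percentage points, not the gain divided by the classic throughput. Measured against the classic baseline, the same gain at 10 MHz would be about 31.7%. The rows are copied from the table; `benefit` is a hypothetical helper.

```python
from typing import Tuple

# Rows copied from Table 4: (bandwidth MHz, classic UL Mbit/s, AI-enabled UL Mbit/s, printed benefit %)
TABLE_4 = [
    (10, 8.27, 10.89, 24.10),
    (20, 21.78, 24.40, 10.76),
    (40, 49.31, 51.93, 5.05),
    (80, 104.88, 107.50, 2.44),
    (100, 132.92, 135.54, 1.94),
]


def benefit(classic: float, ai: float) -> Tuple[float, float, float]:
    """Return (gain in Mbit/s, gain as % of AI-enabled throughput, gain as % of classic throughput)."""
    gain = ai - classic
    return gain, 100.0 * gain / ai, 100.0 * gain / classic


for bw, classic, ai, printed in TABLE_4:
    gain, share_of_max, vs_classic = benefit(classic, ai)
    print(f"{bw:>3} MHz: +{gain:.2f} Mbit/s | {share_of_max:.2f}% of max "
          f"(table: {printed:.2f}%) | +{vs_classic:.2f}% vs classic")
```

A constant absolute gain explains why the relative benefit shrinks as bandwidth grows, and why the narrowband rows show the largest percentages.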

Alternatively, switching from format B4 to C0 with the classic algorithm can increase throughput by 2.3 Mbps by reducing the number of PRACH symbols from 12 to 1. However, GlobalLogic's AI-based ghost filtering achieves an even higher gain of 2.63 Mbps while keeping the most noise-robust B4 format, so operators retain B4 reliability and still improve throughput. With 12 symbols and 10.8 dB of processing gain, the B4 format preserves network accessibility even for distant UEs, securing the best possible coverage. AI effectively clears the channel of erroneous MSG2 and MSG3 scheduling caused by ghost preambles, yielding higher effective data rates than simply switching to the shorter C0 format. In principle, the AI model could also detect ghost preambles with the C0 format, which could lead to even greater throughput gains in certain conditions.

For mobile operators, this translates into improved network performance and lower operating expenditure (OPEX) through better spectrum utilization.

Impact on coverage

Optimizing the preamble detection threshold is another challenge. Setting this threshold is a critical trade-off between ensuring reliable network access and mitigating ghost preambles:

- Setting the threshold too low, to extend cell coverage, causes the base station to process noise as valid signals, which is where ghost preambles appear.

- Setting the threshold too high reduces the impact of ghost preambles but shrinks effective coverage.

Configuring the right cell access power threshold is quite challenging for cell planners. Network equipment OEMs typically target a PRACH false alarm rate between 0.01% and 0.1%, which they achieve with specific threshold values in their proprietary algorithms. Consequently, UEs whose uplink signal level falls below this threshold cannot access the cell, because the base station does not process their preambles. By improving the ghost detection ratio, AI-powered ghost preamble detection allows the detection threshold to be lowered while still mitigating ghost preambles, avoiding the coverage loss associated with existing configurations.
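Why a false alarm target pins the threshold can be shown with an idealized noise-only model. None of these assumptions come from the article: the threshold is expressed in multiples of the mean noise power, the detector searches 139 independent correlation lags, and each lag's noise power is exponentially distributed, as it is for complex Gaussian noise. Real OEM detectors are proprietary and will differ, and the function names are hypothetical. Under this model, tightening the target from 0.1% to 0.01% raises the threshold by only about 0.8 dB; with the target fixed, the threshold cannot move, so lowering it needs another way to reject the extra ghosts, which is the role the article assigns to the AI model.

```python
import math


def false_alarm_prob(threshold: float, n_bins: int) -> float:
    """P(any of n_bins noise-only lags exceeds threshold), threshold in units of mean noise power.

    Idealized model: each lag's noise power is an independent Exp(1) variable.
    log1p/expm1 keep precision at the tiny probabilities of interest.
    """
    return -math.expm1(n_bins * math.log1p(-math.exp(-threshold)))


def threshold_for(p_fa: float, n_bins: int) -> float:
    """Inverse of false_alarm_prob: the normalized threshold that meets a target P_FA."""
    return -math.log(-math.expm1(math.log1p(-p_fa) / n_bins))


N_LAGS = 139  # hypothetical search window: every lag of a 139-point correlation

for target in (1e-3, 1e-4):  # the 0.1% and 0.01% ends of the OEM target range
    t = threshold_for(target, N_LAGS)
    print(f"P_FA {target:.0e}: threshold {t:.2f} x noise ({10 * math.log10(t):.2f} dB)")
```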

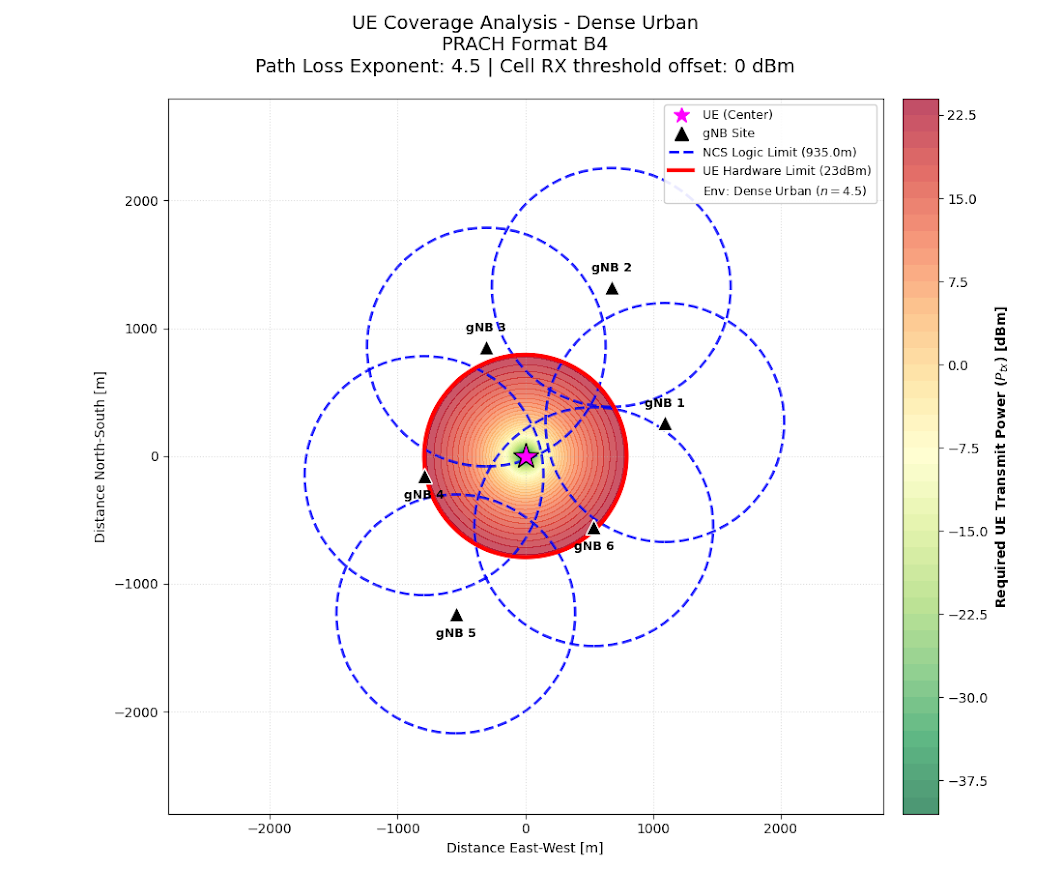

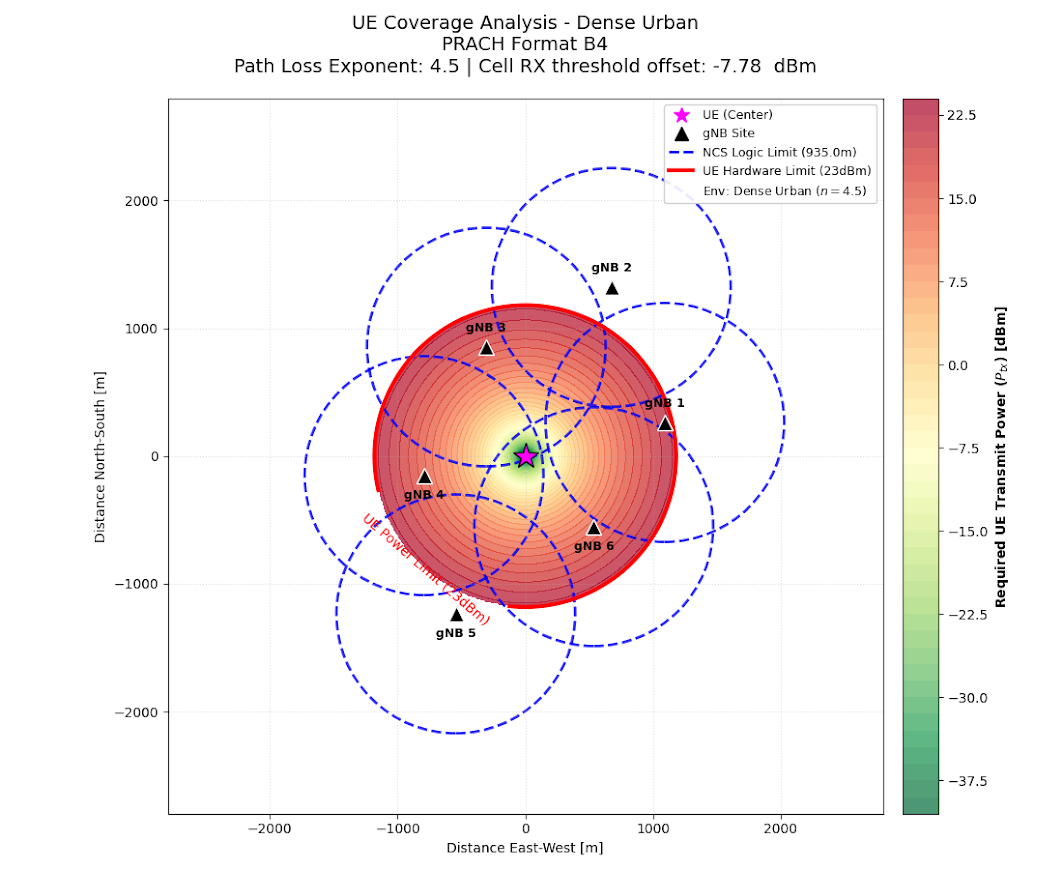

In this case, receiver performance was estimated by lowering the detection threshold from 0 dBm to -7.78 dBm. The results show that the classic detection method loses its ability to distinguish real signals from ghost preambles below a certain power level: at the lowest threshold setting, its ghost preamble detection degrades significantly. The coverage improvement can be estimated from these test results.

Table 5. Potential cell uplink range gain with AI-powered GPD.

| Cell RX threshold offset [dBm] | GPD rate, classic algorithm [%] | AI-powered GPD rate [%] | Cell UL range gain with AI-powered GPD [m] | Cell UL range gain with AI-powered GPD [%] |
|---|---|---|---|---|
| 0 | ≤100 | 100 | 0 | 0 |
| -2.4 | ≤90 | 99+ | +72.5 | +15.1 |
| -3.8 | ≤80 | 99+ | +121.0 | +25.2 |
| -7.78 | ≤60 | 99+ | +279.5 | +58.2 |

Even at the largest negative offset of -7.78 dBm, where the classic algorithm loses its effectiveness in a noisy urban environment, the AI model still detects ghost preambles with 99%+ accuracy. AI makes it possible to operate at this threshold while maintaining stability, providing a link budget gain of 7.78 dB that translates into a 58.2% increase in uplink cell range.
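A link budget gain converts into cell range through a path-loss model. The sketch assumes a log-distance model with a slope of 39.08 dB per decade of distance, the distance coefficient of the 3GPP TR 38.901 UMa NLOS path-loss formula. The article does not name its propagation model, but this slope reproduces the percentage column of Table 5 to within about 0.1 percentage points. The `site_ratio` helper adds a further idealization (uniform layout, uplink-limited cells, site count inversely proportional to cell area); both function names are hypothetical.

```python
import math

# Assumed distance slope (dB per decade), from the 3GPP TR 38.901 UMa NLOS model.
SLOPE_DB_PER_DECADE = 39.08


def range_ratio(link_budget_gain_db: float, slope: float = SLOPE_DB_PER_DECADE) -> float:
    """New/old cell radius under a log-distance model: 10**(gain / slope)."""
    return 10.0 ** (link_budget_gain_db / slope)


def site_ratio(link_budget_gain_db: float) -> float:
    """Idealized sites needed for the same area: area per site scales with radius**2."""
    return 1.0 / range_ratio(link_budget_gain_db) ** 2


for gain_db in (2.4, 3.8, 7.78):  # threshold offsets from Table 5, read as link budget gains
    pct = 100.0 * (range_ratio(gain_db) - 1.0)
    print(f"{gain_db:>5} dB -> range +{pct:.1f}%, sites x{site_ratio(gain_db):.2f}")
```

The metre column of Table 5 is consistent with a baseline uplink radius of roughly 480 m (for example, 279.5 m / 0.582 ≈ 480 m). Under the site-count idealization, a 7.78 dB gain would need about 40% as many sites for the same area; real deployments are rarely this uniform.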

Critically, an additional 279.5 meters of cell range in urban environments can significantly reduce the number of base stations required to cover the same area, directly impacting the operator’s capital expenditures (CAPEX).

The diagrams below illustrate the potential gain for a typical n78 cell after the threshold is changed.

Figure 9. Cell uplink range improvement when changing the cell RX threshold offset from 0 dBm (left) to -7.78 dBm (right).

Key Takeaways

AI‑powered ghost preamble detection delivers meaningful advantages for both Network Equipment OEMs and mobile operators.

For Network OEMs, integrating deep learning–based detection into the PRACH pipeline strengthens competitive positioning with smarter, AI‑driven RAN features that enhance random‑access performance, resolve a key reliability issue in dense or noisy environments, and support premium software offerings. This capability accelerates the shift toward AI‑native RAN architectures and establishes a foundation for future 6G implementations.

For mobile operators, accurate preamble classification preserves uplink resources, reduces signaling, and improves spectrum utilization. At scale these efficiencies translate into lower operational costs and stronger network performance, particularly in challenging radio conditions.

By filtering out ghost preambles and safely lowering the cell access threshold, GlobalLogic's approach delivers substantial, validated gains: peak uplink throughput gains of up to 2.63 Mbps per cell (up to 24.1% in a 10 MHz cell) and up to 58.2% greater effective uplink cell range. The combined impact is a more reliable, higher-quality network with reduced OPEX and CAPEX. These measurable improvements reinforce three strategic takeaways for the industry:

- Intelligence at the edge: Immediate, quantifiable gains in throughput, coverage, and spectral efficiency serve as the instrumental foundation for the industry’s transition toward AI-native 6G.

- Simplified deployment: NVIDIA’s advanced AI-RAN architectures and VIAVI’s rigorous validation environments ensure that engineering, testing, and deployment of complex AI models are streamlined and risk‑mitigated.

- A strategic choice for 6G: The fusion of GlobalLogic's AI‑RAN engineering with NVIDIA and VIAVI technologies offers a scalable, intelligent framework capable of meeting the extreme performance demands of next‑generation networks.

Learn more

Reserve a demo of the ghost preamble detection model at booth 5B18 (Hall 5) at MWC Barcelona or contact us for a consultation.
