Blogs

GlobalLogic

22 February 2026

If You Build Products, You Should Be Using Digital Twins

Digital twins are the foundation of modern product ...

GlobalLogic

18 December 2025

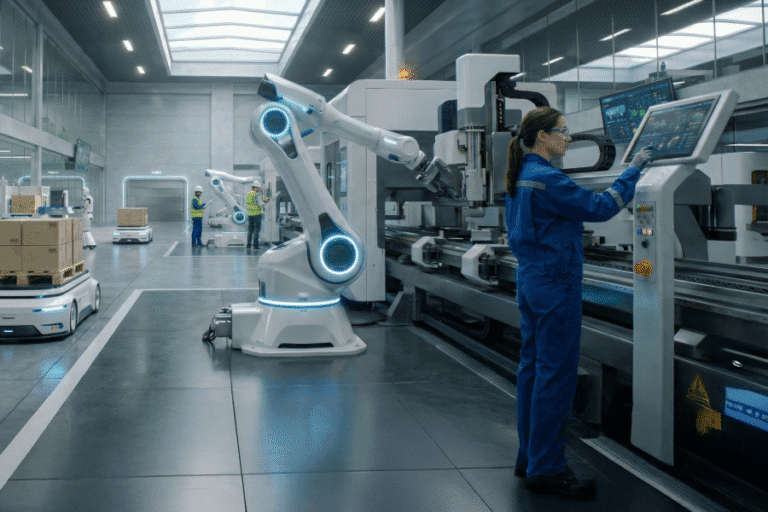

Physical AI: Bringing Intelligence to the Edge of Action

At GlobalLogic, we’re building systems that don’t just ...
