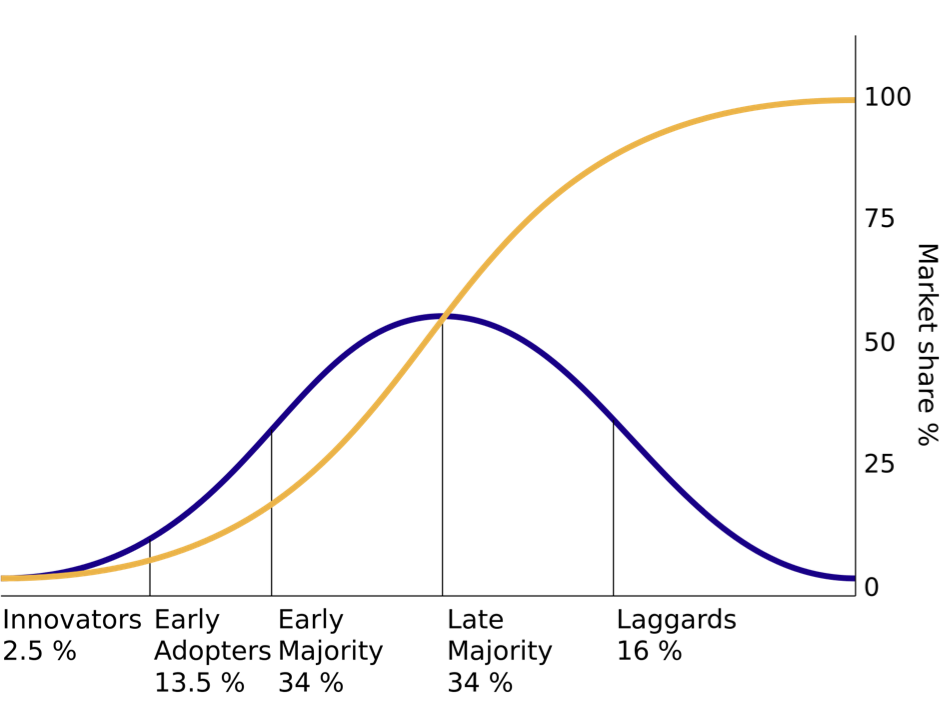

Fig 1: Diagram based on E. Rogers’ “Diffusion of Innovations,” 1962. Courtesy of Wikimedia Commons.

For many companies that depend on technology, the pragmatic “sweet spot” on the technology adoption curve lies somewhere between the early majority and the late majority. By the time a technology begins to be adopted by the early majority, the innovators and early adopters have overcome many of its initial challenges. The benefits of that technology can now be realized without the pain those pioneers had to go through. In addition, a substantial community of companies and developers is in the same position, so resources, training, tools, and support start to become widely available. Finally, the technology is still new enough that the best engineers and architects will be excited to learn and work with it, which makes it a motivator to attract talent.

This assumes, of course, that the new technology delivers benefits. Generally, though, if a technology “crosses the chasm” and reaches the early majority phase, those benefits have already been soundly proven. For example, digital natives like Amazon, Google, and Facebook were early adopters of a variety of then-new technologies. Their risk (and success) subsequently paved the way for the vast majority of companies that now follow in their footsteps.

Most technology-enabled businesses can survive and thrive with technology that is one generation—or even two—behind the technology being used by the early adopters. Once a technology becomes older than that, though, lots of problems come up:

- It becomes harder to attract and retain good talent.

- System uptime, stability, and scalability become less competitive relative to more modern systems.

- The user experience and overall system quality suffer, and security threats cannot be readily countered.

- Good technology options produced by other companies and the open source community become less abundant.

Companies whose technologies fall into Professor Rogers’ “laggard” category will generally experience these issues first-hand, whether or not they recognize that their technology is the cause.

By nature, the specific technologies that fall into each category are moving targets, and meaningful market adoption statistics are hard to come by. Forbes reported in 2018 that 77% of enterprises have a portion of their infrastructure on the cloud, or have at least one cloud-deployed application[1]. This figure resonates with our own experience, but it still does not tell us what percentage of new revenue-generating applications are created using cloud-native / mobile-first architectures, or how aggressively businesses are migrating to the cloud. Our experience suggests “nearly all” and “it varies,” respectively.

Classifying Technology from a Practitioner’s Perspective

To provide a practitioner’s perspective on technology adoption, we decided to create a classification based on our own experience with clients, partners and prospects. Collectively, because of our business model, this set of companies cuts a wide swath through the software product development community, including startups, ISVs, SaaS companies, and enterprises. Because GlobalLogic’s business focus is on the development of revenue-producing software products, the enterprises we work with generally either:

- Already use software to produce direct / indirect revenue

- Recognize that software has become a key component of their other revenue-generating products and services

- Are more conventional but aspire to be more software-centric

- Are “laggards” (usually recently acquired technologies, or companies whose technologies are in need of a refresh)

So what trends are we seeing in the technologies used by this diverse group of software-producing companies? Before we start, please note that the classifications we make here are about the technologies, not the companies. Even the most innovative company probably has some “laggard” technologies deployed. Similarly, even very conservative companies may be early adopters in some areas. For example, some otherwise ultra-conservative banking software companies are incorporating cryptocurrency support.

As of late 2019, we sort the technologies currently in use among our partners, prospects, and client base into five categories: innovators, early adopters, early majority, late majority, and laggards. (If you find the term “laggard” offensive, please note that we use it because it is sociologist Everett Rogers’ term, not ours. It should be read as applying only to the technology, not to the company or the people.)

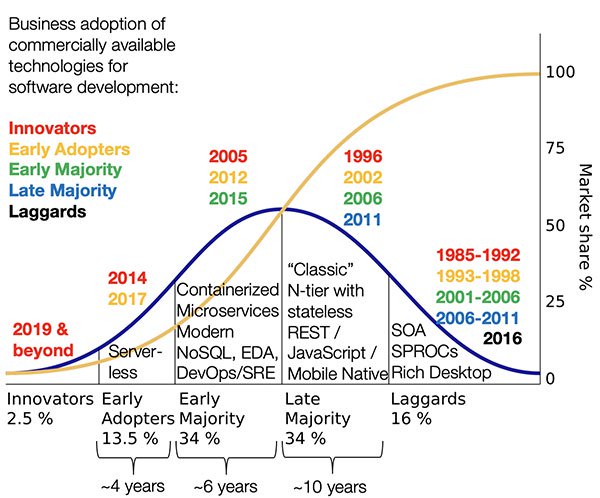

Fig 2: Technology Adoption Picture in Late 2019

Innovators

Relatively few of the technologies currently being investigated or used by innovators ever reach the early adopter stage in their current form, but the work done by innovators informs the early adopters and the entire ecosystem.

Late 2019 innovator technologies include:

- Quantum computing

- Strong AI (that is, systems that “think” like people)

- Network-distributed serverless

GlobalLogic has the same curiosity about these types of technologies as other engineering-focused companies tend to have. However, these technologies are primarily in the research phase and not the revenue-generating phase, so our commercial engagements here tend to be limited.

Early Adopters

Technology in this bucket has widespread availability, but it is either in a relatively rough state that has not been fully productized, or else is an obviously good technology that is still looking for the right opportunity to go mainstream. While a substantial number of these technologies will eventually be taken up by the early and late majority in some form, the timing of this event, as well as the specific winners and losers, has not yet become clear. For example, at this writing, cryptographically secure distributed ledgers will clearly become mainstream at some point. However, will blockchain specifically be the big winner? Out of such bets, fortunes are made or lost.

Early adopters need to invest time and energy to find and then fill the gaps and missing pieces of an incompletely productized technology. However, the rewards of using early adopter technology can be very large if it addresses a genuine need that can’t be readily solved using other approaches. For example, bitcoin, an early application of cryptographically secure distributed ledger technology, has paid off big for many people, though arguably this technology has yet to become mainstream for business applications.

Within our client community, current early adopter technologies include:

- Serverless functions or “Function as a Service” (FaaS)

- Serverless containers

- Blockchain

- Deep learning (as distinct from strong AI)

- Computer vision

- AR/VR (outside of gaming and entertainment)

- Gestural interfaces

We actually have clients using all of these technologies commercially today. For example, we work on public safety systems and autonomous vehicles that use computer vision and deep learning. However, these applications fall into the early adopter / risk taker / first mover / niche application category, rather than what could be considered mainstream business applications. We, along with many others, firmly believe that many of these technologies are rapidly maturing and that they will indeed enter the mainstream in the next few years. But as of right now, we could not claim that they have become part of the mainstream today.

Early Majority

Technology entering the “early majority” bucket is initially a little scary to the mainstream, but it has been thoroughly worked over by the early adopters (and the even earlier entrants into the early majority) and tested in real-life deployments. The blank spaces, boundaries, and rough spots have been largely filled in, and the technology has now been “productized.” Tools and support are available, together with experienced personnel. For many businesses, this is the sweet spot for new product development: early enough to give you a meaningful competitive edge and to be attractive to talented engineers, but not so early that you need to invest the time and energy required to be a pioneer. Early majority technologies also have the longest useful life, since they are just entering the mainstream adoption phase.

Right now, among our customer, prospect, and partner base, we see the early majority adopting containerized, cloud-native, event-driven microservices architectures, together with fully automated CI/CD deployment and “Infrastructure as Code.” We saw this trend starting back in 2015 among our mainstream-focused clients.

Enterprises that are developing new systems or extending older ones are widely adopting:

- Modern NoSQL databases (as opposed to ancient versions of NoSQL)

- Event-driven architectures (microservices-based and otherwise)

- Near real-time stream processing

- DevOps / CI/CD / “Infrastructure as Code” / Site Reliability Engineering

- Containerized cloud-native microservices architectures

On the user experience front, we are beginning to see a significant uptick in mainstream clients who are interested in dynamically extensible “micro front-end” architectures.

Late Majority

Revenue-generating software systems generally age into the late majority. They tend to be created using technologies that were early majority when the system was built, but time has gone by, and those same technologies now fall into the late majority category. This applies to any company that expects to make money from the software—either by selling it (in the case of an ISV or SaaS company), or as part of a product or service (e.g., a car or medical device).

For enterprise-developed, non-revenue-generating applications (e.g., internal back office or employee-facing systems), the situation is somewhat different. Because cost control and low-cost resource availability are primary drivers, internal-use applications are often developed using lower-cost technologies that are now in the late majority stage. Late majority technologies also enable the use of less expensive resources who may not be skilled in early majority technologies.

As an aside, this attitude toward late majority technologies is one reason for the dichotomy between IT-focused and product-focused organizations, both within a given enterprise and in the services businesses that support them. Product-driven organizations and services businesses tend to be skilled in developing systems using early adopter and early majority technologies. IT-focused organizations focus on sustaining systems that use late majority and sometimes laggard technologies. This is obviously an oversimplification, as both product- and IT-focused organizations can certainly be skilled in the full range of technology options. However, product- and IT-focused companies tend to have different attitudes, approaches, and “DNA” with respect to the different stages of technology maturity.

For revenue-generating applications, while development cost is always a factor, time-to-market, competitive advantage, and the overall useful life of the resulting product are generally more important than cost alone. This desire to maximize the upside potential generally drives new revenue-producing app development toward early majority technologies, while non-customer-facing / non-revenue producing / internal-facing applications tend to use late majority technologies to save money.

As of late 2019, the predominant late majority architectural approach is:

- A true N-tier cloud-deployed layered architecture, supporting stateless REST APIs and JavaScript Web / mobile native clients

- RDBMS-centric systems using object-relational mappings (ORMs)

Good implementations of these architectures have strong boundaries between layers exhibiting good separation of concerns, are well componentized internally, and may be cloud-deployed. This is a good, familiar paradigm, and we expect elements of it to persist for some years (e.g., the strong separation between client and “server” through a well-defined stateless interface). However, even the best implementations of the N-tier architecture lack the fine-grained scalability that you can get with early majority microservices technology. Many implementations of this paradigm also tend to be built around a large central database, which itself limits the degree to which the system can scale in a distributed, cloud-native environment.

If history is any indication (and it usually is), we believe the majority will, perhaps reluctantly, leave the N-tier paradigm behind in favor of a cloud-native microservices approach within the next several years.

Laggards

Given its negative connotations, we would really prefer not to use this term. Let’s keep in mind, however, that we use Professor Rogers’ term here to refer to specific technologies, not to the companies or the people who work with them.

Laggard technologies are those that are not used to any significant degree for development of new software systems today, either for revenue-producing products or for internal-use systems. Time has passed these technologies by, literally, and they have been superseded by other technologies that the vast majority recognize as being superior (at least 84% according to the curve).
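The 84% figure comes from how Rogers partitioned the adoption curve: adopter categories are cut at one and two standard deviations from the mean adoption time of a normal distribution, so everyone who adopts before the laggards lies below one standard deviation above the mean. A minimal sketch in Python, assuming that standard normal partition (the boundaries are Rogers’ convention, not data we collected), reproduces the arithmetic behind the curve:

```python
from statistics import NormalDist

# Standard normal model of adoption time (mean 0, standard deviation 1).
# Assumption: Rogers' convention of cutting categories at -2, -1, 0 and +1 SD.
adoption = NormalDist(mu=0.0, sigma=1.0)

# Each category is (name, lower bound, upper bound) in standard deviations.
CATEGORIES = [
    ("innovators", float("-inf"), -2.0),
    ("early adopters", -2.0, -1.0),
    ("early majority", -1.0, 0.0),
    ("late majority", 0.0, 1.0),
    ("laggards", 1.0, float("inf")),
]


def category_shares():
    """Return each category's share of all adopters as a fraction."""
    shares = {}
    for name, low, high in CATEGORIES:
        # Treat infinite bounds explicitly instead of relying on cdf(+/-inf).
        upper = 1.0 if high == float("inf") else adoption.cdf(high)
        lower = 0.0 if low == float("-inf") else adoption.cdf(low)
        shares[name] = upper - lower
    return shares


shares = category_shares()
for name, share in shares.items():
    print(f"{name:15s} {share:6.1%}")

# Everyone who adopts before the laggards lies below +1 SD.
non_laggards = adoption.cdf(1.0)
print(f"{'non-laggards':15s} {non_laggards:6.1%}")
```

The exact shares come out at roughly 2.3%, 13.6%, 34.1%, 34.1%, and 15.9%, which Rogers rounded to the familiar 2.5%, 13.5%, 34%, 34%, and 16%; the non-laggard total is about 84.1%.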

People use laggard technologies only because they have to. Systems based on laggard technologies are still in production, and these systems must be actively enhanced and maintained. Within a given organization, these activities require creating a pool of resources who have knowledge of the laggard technologies. The proliferation of these niche skillsets within the company can drive the creation of new systems using the same laggard technologies, even when better options have become widely available.

For technologies in the laggard category, multiple generations of improved technologies and architectural approaches have, by definition, now become available. In general, these improvements make development easier and faster, scalability and reliability higher, user experience better, and operations cheaper. Nonetheless, companies can find themselves locked into laggard technologies because that is the skillset of their workers. Getting out of this bind is disruptive, and “digital disruption” has become a frequent refrain in the industry.

Current technologies that fall in the laggard category include:

- Microsoft Access-style “two-tier” (real or effective) client / server architectures with tightly coupled UIs and logic in the database (SPROCs, etc.)

- Stateful / session-centric web applications

- Conventional “SOA” / SOAP architectures

- “Rich client” systems (Silverlight, Flash / Flex, and many but not all desktop systems)

- Legacy mainframe-centric systems

In general, any technology that was used by early adopters 20 years ago or longer is a candidate for the laggard bucket.

Conclusion

Technology stays current for a surprisingly long time. Specifically, some major technologies have stayed in the “majority” category (early and late) for about 16 years and, in a few rare cases, even longer. That’s enough time to raise a child from birth to high school. But, as those of us who have raised children know, while time may seem to stand still day-to-day, looking back it passes by in the blink of an eye.

On the technology front, tiered architectures with REST APIs may still seem modern and current, but in fact the early adopters were using them in 2002, and they became mainstream in 2006. If history is any indication, N-tier architectures will enter the “laggard” category by 2022.
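The dates in this paragraph follow from simple arithmetic on the rule of thumb stated earlier: a technology becomes a laggard candidate roughly 20 years after early adopters start using it. The sketch below makes that projection explicit, assuming (as a hypothetical simplification) that the four-year lag from early adoption to mainstream seen in the REST/N-tier example applies generally. The names `project_timeline` and `is_laggard_candidate` are illustrative helpers, not an established model.

```python
from dataclasses import dataclass

# Hypothetical rule-of-thumb offsets taken from the REST/N-tier example:
# early adopters in 2002, mainstream in 2006, laggard candidate ~20 years on.
YEARS_TO_MAINSTREAM = 4
YEARS_TO_LAGGARD = 20


@dataclass(frozen=True)
class AdoptionTimeline:
    early_adopter_year: int
    mainstream_year: int
    laggard_year: int

    @property
    def years_in_majority(self) -> int:
        """Years spent in the early and late majority combined."""
        return self.laggard_year - self.mainstream_year


def project_timeline(early_adopter_year: int) -> AdoptionTimeline:
    """Project milestones from the year early adopters began using a technology."""
    return AdoptionTimeline(
        early_adopter_year=early_adopter_year,
        mainstream_year=early_adopter_year + YEARS_TO_MAINSTREAM,
        laggard_year=early_adopter_year + YEARS_TO_LAGGARD,
    )


def is_laggard_candidate(early_adopter_year: int, current_year: int) -> bool:
    """Apply the '20 years or longer since early adoption' rule of thumb."""
    return current_year - early_adopter_year >= YEARS_TO_LAGGARD


n_tier = project_timeline(2002)
print(n_tier.mainstream_year, n_tier.laggard_year, n_tier.years_in_majority)
print(is_laggard_candidate(2002, 2019), is_laggard_candidate(2002, 2022))
```

With 2002 as the early-adopter year, the sketch yields a 2006 mainstream year, a 2022 laggard year, and 16 years in the majority. As the exceptions listed below show, individual technologies can outlive these offsets, so the output is a heuristic, not a forecast.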

Like Cleopatra—of whom Shakespeare’s character said “age cannot wither”—not all technology ages at the same rate. Some technologies that have reached, or nearly reached, the 20+ year mark while remaining vital include:

- The stateless REST interface paradigm

- HTML/CSS/JavaScript web applications

- Modern NoSQL

- Wi-Fi

- Texting (SMS)

- Apple’s OS X (now macOS) operating system (originally NeXTSTEP)

However, where technologies are concerned, remaining relevant in old age is the exception, not the rule. The technologies and paradigms that have stayed current have not remained static; they have evolved continuously since their beginnings. Good systems do the same, generally by steadily incorporating “early majority” and “early adopter” technologies to keep themselves fresh.

[1] https://www.forbes.com/sites/louiscolumbus/2018/08/30/state-of-enterprise-cloud-computing-2018/#24d16798265e
